Caragh Medlicott investigates the next great digital data earthquake: the development of mind reading technology.

Back in the archaic days of pre-1991, humans existed in just one place. Personhood was restricted to the physical being, to flesh and blood; people started at their head and ended at their toes. But in 2019, this is no longer the case. Not only are select fragments of our identity scattered across the web in the form of social media – our invisible data selves are polished and refined with every browse, click and scroll. With each of our digital actions we provide attuned algorithms with yet another colour with which to paint our data-based portrait. The recent Cambridge Analytica scandal was the first big event to pique public concern around the impact of applied data. Not only can such data accurately predict our actions, it is effective in influencing what we do, what we buy – and now, apparently, how we vote.

As our device use turns habitual, the line between our physical selves and the digital personas we project can begin to blur. Certainly in the eyes of organisations, our data identity provides far more insight than the inexact target audience models of marketing past. All that considered, the recent developments in mind reading technology set more than a few alarm bells ringing. The data rights debate continues to rattle through the online world, and rightly so, but this new tech provides grounds for ethical repercussions on an unprecedented scale.

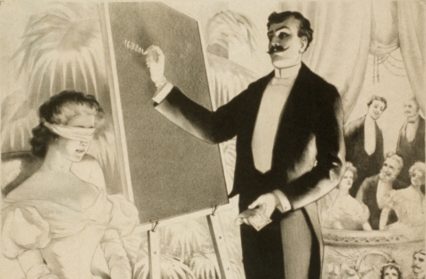

What we think and what we say are often very different things. For example, I may outwardly be engaged in a heated debate regarding solipsism, but inwardly I’m more likely to be thinking about nacho cheese (which is one reason telepathy would pose a serious threat to the strength of my philosophical arguments). As seen with the data issue, trying to regulate with a moral conscience after the fact is difficult. Campaigners have come up against huge blockers in their efforts, and finding clarity on just how much of your data is stored tends to be a long-winded and intimidating process. Tech companies are deflective and cagey when it comes to questions about privacy – just look at current stories on the transcribing of private audio messages. With just a few top dogs racing to be the first to launch new products, ethical implications are quick to be placed on the backburner. This is why it is paramount that moral riddles and privacy safeguards are worked out prior to public release. Especially when it comes to the most personal and impenetrable space we inhabit: our own minds.

Though many are quick to be outspoken about the latest digital advancements, it’s important not to let fear tip the overall balance of our considerations. Especially when it comes to sci-fi-sounding tech, feelings of anxiety are apt to overwhelm our reasoning. The greater level of accessibility made possible by even the most ostensibly off-putting concepts is certainly worth a mention. New York’s Columbia University is currently at work on a “vocoder” device – a tool that seeks to make auditory expression fluid and easy for those without speech. Brain waves are channelled into a voice synthesiser to allow those who have lost their voice a new way of communicating. Though in its very early stages, there’s no denying the refreshing sense of good motivating research of this kind. Still, it’s hard to believe that similar work being undertaken by Facebook and Elon Musk’s Neuralink retains such a conscience. In the words of many a comic-book hero – this tech would be disastrous if it fell into the wrong hands. The trouble is, those wrong hands are more likely to be wearing the latest digital watch than they are to be clawed and blood-stained.

Left unchecked, the ethical implications of unregulated mind reading tech are so huge they could fill a very sizable library. We’ve already seen how data manipulation can breach the integrity of democracy – yet it is hard for us to truly conceive of the power and leverage facilitated by access to human thought. Cambridge Analytica identified psychological weaknesses – specifically, points of fear – to single out voters in swing states, who could then be turned to vote for Trump. If that can be done with the data collated and analysed from a reasonably lightweight personality test, who knows what vulnerabilities could be exploited through access to the mysteries of human thought. The development of this Orwellian technology would give a whole new definition to the “thought police” ideology that’s recently transferred into the vocabulary of the alt-right. It inevitably becomes both a legal and political issue – how do the judicial, education and healthcare sectors operate in a world where mind reading is possible?

Of course, the issues brought about by knock-on consequences aren’t the direct responsibility of anyone in the profit-oriented tech industry. Facebook’s old motto was, quite literally, to “move fast and break things”. The brain chip being developed by Neuralink – or more accurately, brain ‘thread’ – is intended to break down the walls between computer and mind. While at this early stage, the official line from Neuralink is that their first goal is to cure “brain-related” diseases, Musk has already gone off-piste to speak of his vision of human and artificial intelligence “symbiosis”. The robot-handled operation required to insert the thread is incredibly complex, and there’s no clarity on whether it will be reversible – this raises the question of whether a one-time opt-in goes far enough in protecting the individual. It seems ironic that sodium thiopental (more commonly known as “truth serum” – as seen in many a spy film) is illegal in most parts of the world specifically because of its ethical ramifications, yet far more invasive technology of this kind can be publicly developed with only a few quickly disappearing techy articles to follow in its wake.

Silicon Valley’s tendency to favour obfuscation over clarity is just one of the barriers which ought to be axed quickly. The question of user consent is already being battled by digital citizens. With the GDPR data sanctions which came into play across Europe last year, it is clear there is a growing concern around user information and clarity. We’ve seen a greater emphasis placed on the importance of informed users.

In days past, it may have been all well and good to use diversion tactics and impenetrable terms and conditions to nudge individuals into unwittingly agreeing to questionable data terms, but under the new regulations there is a clear onus placed on explicit and unambiguous consent. It is telling that the most high profile GDPR breach to date has come from Silicon Valley royalty itself: Google. In the earthquake which followed the Cambridge Analytica and #DeleteFacebook drama, the spotlight was quickly moved onto Google, who have been quietly watching and tracking us for a long, long time. In fact, it was just last year that Google were forced to admit that external app developers and data companies were allowed to read personal Gmail messages.

This, like so many other troubling issues, was a conscious decision – I don’t for one minute believe that those authorising such access, or burying it in unclear privacy terms, really thought that people would be okay with this. The bottom line is that companies such as Google will always take as much rope as they are given; so long as the layers of complexity in the tech realm make regulation difficult, big corporations will continue to profit (often unethically) from the end user.

Cognitive research and the potential it holds for those with sensory impairment is nothing to be sniffed at – what should concern us, however, is the extent to which research is being conducted not in the academic field, but the commercial one. While Musk races towards his child’s sci-fi vision for the future and Facebook looks to find yet another way to plug users seamlessly into their suite of addictive apps, it’s time for industry experts and ethicists alike to align and formulate adaptable and responsible regulations. It’s not an easy task, and with the rise of deep fakes and other technology made with the sole purpose of obscuring objective truth, there is a tricky balancing act to perform – one with many plates to spin. Yet, in a future world where mind reading is more than a party trick performed by Edward Cullen and Charles Xavier, it is an act we must perfect. To fail to do so could lead to the permanent collapse of the private and public sphere, with further implications that, really, just don’t bear thinking about.

Caragh Medlicott is a Wales Arts Review columnist.

Enjoyed this article? Support our writers directly by buying them a coffee and clicking this link.